How Optimizing Computer Performance Improves AI Applications and Workflow Efficiency

Understand how upgrading hardware and optimizing systems improves AI application performance and operational efficiency.

Artificial intelligence is no longer a niche technology reserved for research labs or enterprise data teams. Across North America, AI tools are now embedded in everyday workflows, and as system demands grow, so does the need to understand how to optimize computers for AI application performance. Businesses rely on automation software to streamline operations, marketers use generative AI systems to create content, analysts depend on predictive platforms to interpret data, and remote professionals integrate AI assistants into daily productivity routines.

As AI becomes more central to how work gets done, the performance demands placed on computers have increased significantly. While many AI systems operate partially in the cloud, local hardware still plays a critical role in ensuring smooth operation. Processing power, memory capacity, storage speed, and overall system optimization directly influence how efficiently AI tools run.

When systems are not optimized, users experience lag, instability, and workflow disruption. Over time, these inefficiencies undermine the productivity gains AI is designed to provide. Understanding how computer performance affects AI applications allows individuals and organizations to build more reliable, scalable digital environments.

Why AI Workloads Are More Demanding Than Traditional Software

Traditional applications typically perform predictable, repetitive tasks. AI-driven systems are different. They analyze patterns, process large datasets, render complex outputs, and manage conditional logic simultaneously.

Even browser-based AI tools can place a heavy strain on a system when multiple tabs and applications are open. For example, a professional might be:

- Running a data dashboard

- Generating reports with AI

- Syncing cloud storage

- Participating in video calls

- Managing automation scripts

Individually, these tasks may seem manageable. Combined, they create sustained computational demand. If hardware resources are limited, the system begins to slow down.

This is why optimizing performance is not just about speed; it’s about maintaining stability under load.

The Role of Processing Power

The central processing unit (CPU) is responsible for executing instructions and managing active processes. AI tools frequently require parallel processing, where multiple operations run simultaneously.

Modern multi-core processors improve performance by distributing workloads across cores. When users rely heavily on AI tools, especially alongside other software, stronger processing capability reduces bottlenecks and prevents system freezes.
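As a minimal sketch of distributing work across cores, the Python example below uses the standard library's `concurrent.futures.ProcessPoolExecutor` to run independent CPU-bound tasks in separate processes. The prime-counting function is a hypothetical stand-in for real work; AI applications manage their own threading internally, so this only illustrates the principle.

```python
import os
from concurrent.futures import ProcessPoolExecutor


def count_primes(limit: int) -> int:
    """Hypothetical CPU-bound task: count primes below `limit` by trial division."""
    count = 0
    for n in range(2, limit):
        if all(n % d for d in range(2, int(n ** 0.5) + 1)):
            count += 1
    return count


def run_in_parallel(limits: list[int]) -> list[int]:
    """Spread independent tasks across the available CPU cores.

    One worker process is started per reported core, so several tasks can
    execute at the same time instead of queuing on a single core.
    """
    workers = os.cpu_count() or 1
    with ProcessPoolExecutor(max_workers=workers) as pool:
        # map() returns results in the same order as the inputs
        return list(pool.map(count_primes, limits))


if __name__ == "__main__":
    print(run_in_parallel([5_000, 10_000, 15_000, 20_000]))
```

Processes are used rather than threads because CPython's global interpreter lock stops threads from executing Python bytecode on several cores at once. The `__main__` guard matters on platforms that start worker processes by re-importing the script.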

Signs that processing power may be insufficient include:

- Delayed response when switching applications

- Slow rendering of AI-generated outputs

- Constant fan noise and high temperatures under sustained load

- Noticeable lag during multitasking

Upgrading to a more capable processor or ensuring existing resources are not overloaded can significantly improve workflow efficiency.
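One rough way to check whether existing resources are overloaded is to compare the system load average with the number of CPU cores. The sketch below assumes a Unix-like system (Linux or macOS), where `os.getloadavg()` is available. The threshold of 1.0 per core is a common rule of thumb rather than a hard limit, and on Linux the load figure also counts processes waiting on disk I/O.

```python
import os


def cpu_saturation(load_1min: float, cores: int) -> float:
    """Return the 1-minute load average per core.

    Values near or above 1.0 mean runnable work roughly matches or exceeds
    the number of cores, a common sign the CPU is the bottleneck.
    """
    return load_1min / max(cores, 1)


def check_cpu() -> str:
    # os.getloadavg() exists on Unix-like systems only
    if not hasattr(os, "getloadavg"):
        return "load average not available on this platform"
    load_1min, _, _ = os.getloadavg()
    ratio = cpu_saturation(load_1min, os.cpu_count() or 1)
    status = "likely overloaded" if ratio >= 1.0 else "headroom available"
    return f"load per core: {ratio:.2f} ({status})"


if __name__ == "__main__":
    print(check_cpu())
```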

Memory (RAM) and Workflow Stability

Random Access Memory (RAM) determines how much active information a system can handle at once. AI applications often load large volumes of data into memory while processing.

When RAM is insufficient, the system moves data to disk-based swap space (the page file on Windows), which is far slower than RAM. This shift causes lag, reduced responsiveness, and, in severe cases, crashes.
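On Linux, the kernel reports memory and swap figures in `/proc/meminfo`. The minimal sketch below parses that text to show how much RAM is still available and how much swap is in use. It is Linux-specific, and some swap usage is normal because the kernel may move idle pages out of RAM; a low available percentage combined with growing swap use is the more telling pattern.

```python
import os


def parse_meminfo(text: str) -> dict[str, int]:
    """Parse /proc/meminfo text into {field name: value}.

    Most fields are reported in kB (kibibytes); unit suffixes are dropped.
    """
    values: dict[str, int] = {}
    for line in text.splitlines():
        key, _, rest = line.partition(":")
        parts = rest.split()
        if parts and parts[0].isdigit():
            values[key.strip()] = int(parts[0])
    return values


def memory_pressure(info: dict[str, int]) -> dict[str, float]:
    """Summarize available RAM (percent of total) and swap in use (MiB)."""
    total = info["MemTotal"]
    # MemAvailable estimates memory usable without swapping; fall back to MemFree
    available = info.get("MemAvailable", info.get("MemFree", 0))
    swap_used_kib = info.get("SwapTotal", 0) - info.get("SwapFree", 0)
    return {
        "available_pct": 100.0 * available / total,
        "swap_used_mib": swap_used_kib / 1024,
    }


if __name__ == "__main__" and os.path.exists("/proc/meminfo"):
    with open("/proc/meminfo") as f:
        print(memory_pressure(parse_meminfo(f.read())))
```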

For professionals working with AI daily, higher memory capacity allows smoother multitasking and reduces the need to close programs constantly just to maintain performance. Rather than limiting workflow flexibility, sufficient memory supports simultaneous use of analytics platforms, communication apps, generative tools, and automation systems.

Memory upgrades are often one of the most effective and affordable ways to improve AI-related performance.

Storage Speed and Data Responsiveness

Storage technology influences how quickly data is accessed and saved. Traditional hard disk drives (HDDs) rely on mechanical movement, which limits speed. Solid-state drives (SSDs) use flash memory for significantly faster data access, and NVMe models, which connect over the PCIe interface, are faster still than SATA-based SSDs.

AI systems often create temporary files, cache information, and process large datasets. Faster storage reduces:

- Application startup time

- File loading delays

- Save/export lag

- System boot time

While storage upgrades do not increase processing capacity directly, they dramatically improve overall responsiveness, making AI workflows feel smoother and more efficient.
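To compare drives, a rough sequential throughput test can be written with the Python standard library alone. This sketch writes a temporary file, forces it to disk with `os.fsync`, then reads it back. It is not a substitute for dedicated benchmarking tools: the read figure is usually inflated by the operating system's file cache, and results vary with file size and drive state.

```python
import os
import tempfile
import time

CHUNK = 1024 * 1024  # 1 MiB


def measure_write_read(size_mib: int = 64, directory=None) -> dict[str, float]:
    """Time a sequential write and read of `size_mib` MiB; return MiB/s.

    `directory` selects the drive to test (None uses the system temp dir).
    """
    data = os.urandom(CHUNK)  # random bytes resist drive-level compression
    with tempfile.NamedTemporaryFile(dir=directory, delete=False) as tmp:
        path = tmp.name
        start = time.perf_counter()
        for _ in range(size_mib):
            tmp.write(data)
        tmp.flush()
        os.fsync(tmp.fileno())  # wait until the data reaches the drive
        write_s = time.perf_counter() - start
    try:
        start = time.perf_counter()
        with open(path, "rb") as f:
            while f.read(CHUNK):  # read 1 MiB at a time until end of file
                pass
        read_s = time.perf_counter() - start
    finally:
        os.remove(path)
    return {
        "write_mib_s": size_mib / max(write_s, 1e-9),
        "read_mib_s": size_mib / max(read_s, 1e-9),
    }


if __name__ == "__main__":
    print(measure_write_read())
```

Running it once with `directory` pointing at a folder on an HDD and once on an SSD gives a rough side-by-side comparison.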

GPU Acceleration and Specialized AI Tasks

For certain workloads, graphics processing units (GPUs) play a critical role. GPUs are optimized for handling large numbers of mathematical calculations simultaneously, making them ideal for tasks such as image generation, video rendering, and machine learning model training.
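The reason GPUs suit this kind of math can be seen in matrix multiplication, a core operation in many machine learning models. In the plain Python sketch below, each output element is computed from one row and one column, independently of every other output element, so the work can be split across many parallel units. This version runs sequentially on the CPU and only illustrates the structure of the computation.

```python
def matmul(a: list[list[float]], b: list[list[float]]) -> list[list[float]]:
    """Plain matrix multiply: C[i][j] = sum over k of A[i][k] * B[k][j].

    Every C[i][j] depends only on row i of A and column j of B, never on
    another output element. That independence is what lets a GPU compute
    many of these small dot products in parallel; this CPU version simply
    computes them one after another.
    """
    n, m, p = len(a), len(b), len(b[0])
    if any(len(row) != m for row in a):
        raise ValueError("inner dimensions must match")
    return [
        [sum(a[i][k] * b[k][j] for k in range(m)) for j in range(p)]
        for i in range(n)
    ]


if __name__ == "__main__":
    print(matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))  # → [[19, 22], [43, 50]]
```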

Creative professionals using AI-powered design tools often benefit from capable GPUs. Rendering times decrease, previews load faster, and visual outputs generate more efficiently. Businesses working with predictive modelling may also see improved performance with GPU acceleration.

Not all users require high-end graphics hardware. However, understanding whether your AI workload includes visual or computationally intensive tasks helps determine whether GPU upgrades are necessary.

System Optimization and Maintenance

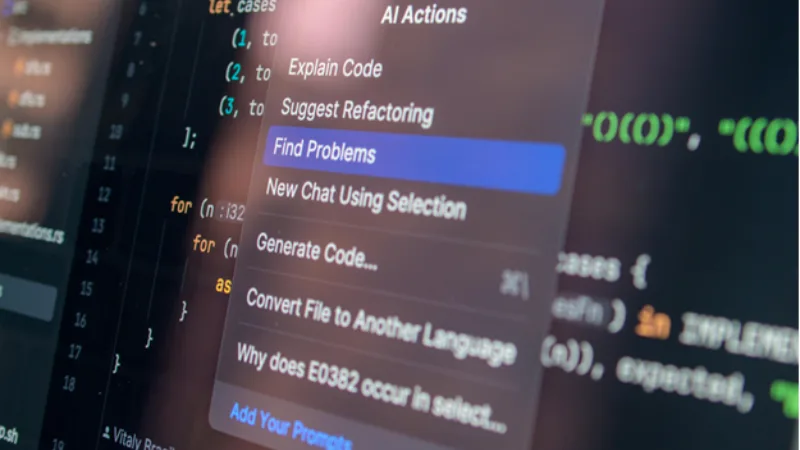

Hardware is only part of the equation. Over time, computers accumulate background processes that quietly consume resources. Startup programs, outdated drivers, and unnecessary applications can reduce available capacity.

Routine system maintenance improves performance without requiring major upgrades. This includes:

- Reviewing and limiting startup programs

- Keeping operating systems updated

- Removing unused applications

- Monitoring system resource usage

Optimized systems maintain consistent performance levels, reducing unexpected slowdowns during critical tasks.
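For monitoring system resource usage, built-in tools such as Task Manager or Activity Monitor are the usual starting point. As a minimal scripted alternative, the sketch below records free disk space and, on Unix-like systems, the load average at fixed intervals using only the Python standard library. The sample count and interval are arbitrary defaults.

```python
import os
import shutil
import time


def snapshot(path: str = ".") -> dict[str, float]:
    """Capture one reading: time, free disk space, and load if available."""
    disk = shutil.disk_usage(path)  # filesystem containing `path`
    reading = {
        "timestamp": time.time(),
        "disk_free_pct": 100.0 * disk.free / disk.total,
    }
    if hasattr(os, "getloadavg"):  # Unix-like systems only
        reading["load_1min"] = os.getloadavg()[0]
    return reading


def monitor(samples: int = 5, interval_s: float = 1.0, path: str = ".") -> list[dict[str, float]]:
    """Take `samples` readings spaced `interval_s` seconds apart."""
    history = []
    for i in range(samples):
        history.append(snapshot(path))
        if i < samples - 1:  # no need to sleep after the last reading
            time.sleep(interval_s)
    return history


if __name__ == "__main__":
    for reading in monitor():
        print(reading)
```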

Network Performance and Cloud-Based AI

Although many AI tools process data in the cloud, network performance directly affects responsiveness. Slow or unstable internet connections introduce delays in data synchronization, cloud rendering, and collaborative workflows.

Stable, high-speed connectivity ensures that cloud-based AI applications operate smoothly. For businesses, evaluating bandwidth capacity and minimizing network congestion supports uninterrupted AI usage.
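A simple way to gauge connection quality toward a cloud service is to time how long TCP connections take to open. The sketch below uses Python's `socket.create_connection` and reports the median and the slowest attempt. The default port 443 assumes an HTTPS service, each attempt may include DNS lookup time, and a failed connection raises `OSError`. It measures connection setup latency only, not bandwidth.

```python
import socket
import statistics
import time


def connect_latency_ms(host: str, port: int = 443, attempts: int = 5,
                       timeout: float = 3.0) -> dict[str, float]:
    """Time TCP connection setup to host:port as a rough latency probe.

    A slowest attempt far above the median suggests an unstable link
    (jitter), which tends to show up as stutter in cloud-based tools.
    """
    times = []
    for _ in range(attempts):
        start = time.perf_counter()
        with socket.create_connection((host, port), timeout=timeout):
            pass  # connection established; close it immediately
        times.append((time.perf_counter() - start) * 1000)
    return {
        "median_ms": statistics.median(times),
        "max_ms": max(times),
    }
```

For example, `connect_latency_ms("example.com")` would probe a public HTTPS host; repeating the check at different times of day can reveal congestion patterns.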

Designing Efficient AI Workflows

Beyond hardware and connectivity, workflow design plays a major role in efficiency. Users who rely on AI heavily benefit from structuring tasks strategically. Closing unused tabs, staggering intensive processes, and organizing file systems can reduce system strain.
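Staggering intensive processes can be automated by capping how many run at once. In this sketch, a `ThreadPoolExecutor` with a small `max_workers` value acts as the cap, so extra jobs wait their turn. Threads fit here because each job mostly waits on an external program launched with `subprocess.run`; the jobs themselves are hypothetical placeholders, and the limit of two is an arbitrary example.

```python
import subprocess
import sys
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable, Iterable


def run_staggered(jobs: Iterable[Callable[[], Any]], max_concurrent: int = 2) -> list[Any]:
    """Run jobs with at most `max_concurrent` executing at any moment.

    The pool's worker count is the cap: surplus jobs queue until a worker
    frees up, instead of all competing for CPU, memory, and disk at once.
    Results are returned in the same order as `jobs`.
    """
    with ThreadPoolExecutor(max_workers=max_concurrent) as pool:
        futures = [pool.submit(job) for job in jobs]
        return [future.result() for future in futures]


if __name__ == "__main__":
    # Hypothetical heavy jobs: each launches a separate Python process.
    commands = [[sys.executable, "-c", f"print('job {n} done')"] for n in range(4)]
    jobs = [lambda cmd=cmd: subprocess.run(cmd, check=True) for cmd in commands]
    run_staggered(jobs, max_concurrent=2)
```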

Efficiency comes from alignment between hardware capability and usage patterns. When systems are optimized and workflows are intentional, AI tools deliver consistent productivity gains.

Long-Term Scalability

AI software continues evolving rapidly. Models are becoming more sophisticated, datasets are larger, and automation is more complex. Systems that barely meet today’s requirements may struggle with future updates.

Planning for scalability involves selecting hardware that allows upgrades and monitoring performance trends over time. Rather than waiting for significant slowdowns, proactive optimization ensures long-term stability.
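Monitoring performance trends can be as simple as recording one figure per period, such as average memory use in percent each month, and projecting it forward. The sketch below fits a straight line by least squares and estimates how many more periods remain before a chosen threshold is crossed. Assuming linear growth is a simplification, and the readings and 90% threshold in the usage line are hypothetical.

```python
from typing import Optional


def linear_trend(values: list[float]) -> tuple[float, float]:
    """Least-squares fit values[t] ≈ intercept + slope * t for t = 0, 1, 2, ..."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two readings")
    mean_t = (n - 1) / 2
    mean_v = sum(values) / n
    cov = sum((t - mean_t) * (v - mean_v) for t, v in enumerate(values))
    var = sum((t - mean_t) ** 2 for t in range(n))
    slope = cov / var
    return mean_v - slope * mean_t, slope


def periods_until(values: list[float], threshold: float) -> Optional[float]:
    """Estimate how many more periods until the trend line reaches `threshold`.

    Returns None when usage is flat or falling, 0.0 if already past it.
    """
    intercept, slope = linear_trend(values)
    if slope <= 0:
        return None
    current = intercept + slope * (len(values) - 1)  # trend value at latest reading
    return max((threshold - current) / slope, 0.0)


if __name__ == "__main__":
    print(periods_until([50, 55, 60, 65], threshold=90))  # → 5.0
```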

Performance and Productivity Moving Forward

Artificial intelligence has transformed how individuals and businesses operate, but its effectiveness depends heavily on the computing environment supporting it. Processing power, memory capacity, storage speed, graphics capability, and system maintenance all contribute to reliable AI performance.

Optimizing computer performance enhances responsiveness, reduces downtime, and supports sustainable workflow efficiency. As AI tools continue to expand in capability and complexity, maintaining well-configured and scalable systems will remain essential for maximizing productivity and long-term technological resilience.