How AI Music Generators Are Quietly Changing Creative Ownership

How AI music generators are reshaping creative ownership, raising questions around copyright, originality, and the future of music creation.

The gap between having a musical idea and turning it into a finished track has always been larger than people admit. Most ideas never become songs, not because they lack creativity but because they lack execution. When I first explored AI Music Generator, what stood out was not just the speed but how far it compresses that gap, to the point where the process feels almost frictionless.

In traditional workflows, ideas pass through layers: composition, arrangement, production, recording. Each layer introduces both refinement and delay. This system instead seems to treat the entire process as a single translation step, from language to sound. That shift feels subtle at first, but it changes how we think about authorship.

Why Music Creation Used To Be Structurally Limited

Access Was Always The Hidden Constraint

Historically, making music required:

- Instrument proficiency

- Recording tools

- Mixing and mastering knowledge

Even digital tools didn’t remove complexity; they redistributed it. Digital audio workstations (DAWs) lowered the barrier, but they didn’t eliminate the learning curve.

Ideas And Execution Were Never Equal

Most creators can imagine:

- A melody

- A mood

- A lyrical theme

But very few can translate those into structured audio. This imbalance is where most creative loss happens.

How Language Becomes Music Inside The System

From Words To Musical Parameters

When you input a prompt or lyrics, the system appears to interpret:

- Emotional tone → key and harmony

- Descriptive words → instrumentation

- Rhythm in language → tempo and phrasing

In my testing, phrases like “melancholic piano” consistently leaned toward slower tempos and minor tonalities. That consistency suggests a stable mapping between language and musical structure.
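To make the idea of a language-to-parameter mapping concrete, here is a toy sketch in Python. It is not how the system works internally (that is not documented here); the keyword tables, the `MusicalParams` fields, and the tempo values are all invented for illustration. It only shows what a stable mapping from prompt words to coarse musical settings could look like.

```python
from dataclasses import dataclass, field

# Hypothetical keyword tables, invented for illustration only.
MOOD_HINTS = {
    "melancholic": {"mode": "minor", "tempo_bpm": 70},
    "uplifting": {"mode": "major", "tempo_bpm": 120},
    "energetic": {"mode": "major", "tempo_bpm": 140},
}
INSTRUMENTS = {"piano", "guitar", "strings", "synth", "drums"}


@dataclass
class MusicalParams:
    mode: str = "major"      # default when no mood word is found
    tempo_bpm: int = 100     # default tempo, an arbitrary assumption
    instruments: list[str] = field(default_factory=list)


def interpret_prompt(prompt: str) -> MusicalParams:
    """Map words in a prompt to coarse musical parameters."""
    params = MusicalParams()
    for word in prompt.lower().split():
        word = word.strip(".,!?\"'")
        if word in MOOD_HINTS:
            params.mode = MOOD_HINTS[word]["mode"]
            params.tempo_bpm = MOOD_HINTS[word]["tempo_bpm"]
        elif word in INSTRUMENTS:
            params.instruments.append(word)
    return params


print(interpret_prompt("melancholic piano"))
# MusicalParams(mode='minor', tempo_bpm=70, instruments=['piano'])
```

A real model almost certainly learns such associations from data rather than from a lookup table, but the observable behavior in my testing resembled this kind of consistent mapping.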

Structural Awareness Beyond Surface Generation

The system doesn’t just generate sound; it also organizes it:

- Verses remain restrained

- Choruses become more dynamic

- Transitions feel intentional

This suggests that generation is not random layering but sequencing guided by song structure.

What Actually Happens When You Input Lyrics

Semantic Parsing Of Lyrics

When using lyrics, the system seems to:

- Identify emotional arcs

- Detect repetition patterns

- Segment lines into musical phrasing

This allows it to align melody with meaning rather than treating lyrics as static text.
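Two of the behaviors listed above, segmenting lyrics and spotting repetition, are easy to illustrate with plain text processing. The sketch below is an assumption about what such a preprocessing step might resemble, not the system's actual method; the function names are hypothetical, and it does not attempt the harder problem of identifying emotional arcs.

```python
from collections import Counter


def normalize(line: str) -> str:
    """Lowercase and drop punctuation so near-identical lines compare equal."""
    return "".join(ch for ch in line.lower() if ch.isalnum() or ch.isspace()).strip()


def segment_lyrics(lyrics: str) -> list[list[str]]:
    """Split lyrics into sections on blank lines; each section is a list of lines."""
    sections, current = [], []
    for raw in lyrics.splitlines():
        line = raw.strip()
        if line:
            current.append(line)
        elif current:
            sections.append(current)
            current = []
    if current:
        sections.append(current)
    return sections


def repeated_lines(lyrics: str) -> set[str]:
    """Return normalized lines that occur more than once (chorus or hook candidates)."""
    counts = Counter(normalize(l) for l in lyrics.splitlines() if l.strip())
    return {line for line, n in counts.items() if n > 1}


lyrics = """Rain on the window
I count the hours

Hold on, hold on
The night is long

Empty streets below
I count the hours

Hold on, hold on
The night is long
"""

print(len(segment_lyrics(lyrics)))     # 4
print(sorted(repeated_lines(lyrics)))  # ['hold on hold on', 'i count the hours', 'the night is long']
```

Lines that repeat across sections are natural candidates for a chorus or hook, which is one plausible way a generator could decide where the melody should return.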

Voice And Delivery Modeling

The generated vocals are not merely synthetic tones. They attempt:

- Timing alignment with syllables

- Emotional emphasis

- Dynamic variation

While the vocals are still distinguishable from human singers, the output often feels structurally “correct,” which matters more than perfect realism.

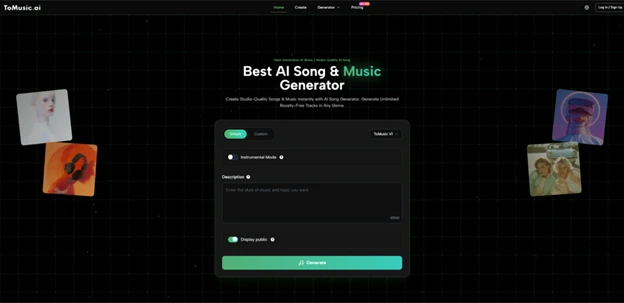

How The Creation Flow Works In Practice

Step 1: Define A Musical Intent

You begin with either:

- A descriptive prompt

- Full lyrics

The system relies heavily on this step, so specificity tends to improve results.

Step 2: Select Style And Parameters

Options typically include:

- Genre

- Mood

- Vocal presence

These act as constraints rather than detailed controls.

Step 3: Generate And Evaluate Output

The system produces a complete track, including:

- Arrangement

- Instrumentation

- Vocals (if enabled)

Multiple generations are often necessary to refine results.

Comparing Traditional Production And AI Workflow

| Aspect | Traditional Workflow | AI-Based Workflow |
| --- | --- | --- |
| Entry Barrier | High (skills required) | Low (text input) |
| Time To Output | Hours to weeks | Minutes |
| Creative Control | Detailed but complex | Simplified but abstract |
| Iteration Speed | Slow | Fast |
| Required Tools | Multiple (DAW, plugins) | Single interface |

The difference is not just efficiency; it is how decisions are made. Traditional production emphasizes precision, while AI generation emphasizes iteration.

Where Lyrics to Music AI Changes The Equation

At a certain point in testing, the most noticeable shift came from using Lyrics to Music AI. Instead of starting with abstract prompts, you begin with narrative content.

This changes the workflow in two important ways:

- The structure is no longer inferred from a short prompt; it is guided by the lyrics themselves

- Emotional direction becomes more consistent across the track

In practice, this often results in songs that feel more cohesive, even if they are not technically perfect.

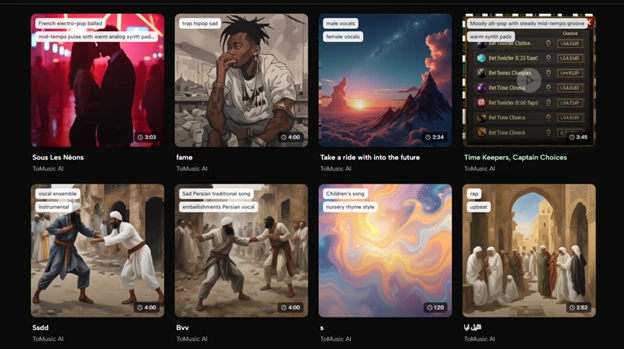

Real Usage Contexts That Reveal Its Value

Content Creation At Scale

For video creators:

- Background music can be generated quickly

- Styles can be matched to specific content themes

This reduces reliance on stock libraries.

Rapid Prototyping For Musicians

For songwriters:

- Ideas can be tested instantly

- Different arrangements can be explored without manual production

This turns the tool into a sketching environment.

Experimental Storytelling

For narrative projects:

- Lyrics-driven generation allows direct translation from story to sound

- Emotional pacing can be explored through multiple iterations

Where The System Still Shows Limitations

Prompt Sensitivity

Results depend heavily on input quality:

- Vague prompts produce generic outputs

- Overly complex prompts can confuse structure

Inconsistency Across Generations

Even with identical inputs:

- Outputs may vary significantly

- Some versions feel more coherent than others

This reinforces the need for iteration.
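The iteration described here amounts to a "generate several, keep the best" loop. The sketch below shows that pattern with a stand-in generator, since no real API is documented in this article: `generate_stub`, the `coherence` field, and the scoring function are all hypothetical. In practice the score is usually the listener's own judgment.

```python
import random
from typing import Callable


def generate_stub(prompt: str, seed: int) -> dict:
    """Stand-in for a real generator: returns a fake track with a random 'coherence' value."""
    rng = random.Random(f"{prompt}:{seed}")  # same prompt, different seed -> different output
    return {"prompt": prompt, "seed": seed, "coherence": rng.random()}


def best_of(prompt: str, n: int,
            generate: Callable[[str, int], dict],
            score: Callable[[dict], float]) -> dict:
    """Generate n candidates from the same prompt and keep the highest-scoring one."""
    if n < 1:
        raise ValueError("n must be at least 1")
    candidates = [generate(prompt, seed) for seed in range(n)]
    return max(candidates, key=score)


best = best_of("melancholic piano", n=5, generate=generate_stub,
               score=lambda track: track["coherence"])
print(best["seed"], round(best["coherence"], 3))
```

The prompt stays fixed while only the seed changes, which mirrors the observation that identical inputs can still produce noticeably different tracks.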

Human-Level Nuance Is Not Fully Replicated

While structure is strong:

- Subtle emotional delivery is not always consistent

- Vocal expression can feel slightly uniform

Why This Feels Like A Shift, Not A Tool

What stands out is not just the functionality, but the conceptual change:

- Music is no longer bound to skill acquisition

- Creation becomes closer to description

This does not replace traditional production. Instead, it introduces a parallel path, one where ideas are not filtered by technical ability.

What This Means For Creative Ownership Going Forward

The more time I spent using systems like this, the more it felt like authorship is being redefined. If a song can emerge directly from language, then:

- The role of the creator shifts from producer to director

- The barrier between imagination and output becomes thinner

That doesn’t necessarily make music easier. It makes it different.

And perhaps more importantly, it changes who gets to participate.